Can Generative AI Improve Hazard Identification?

Explore the 2026 era of AI in HAZOP. Discover how Generative AI, Knowledge Graphs, and Functional Digital Twins are reducing study times by 50% while uncovering “blind spot” hazards.

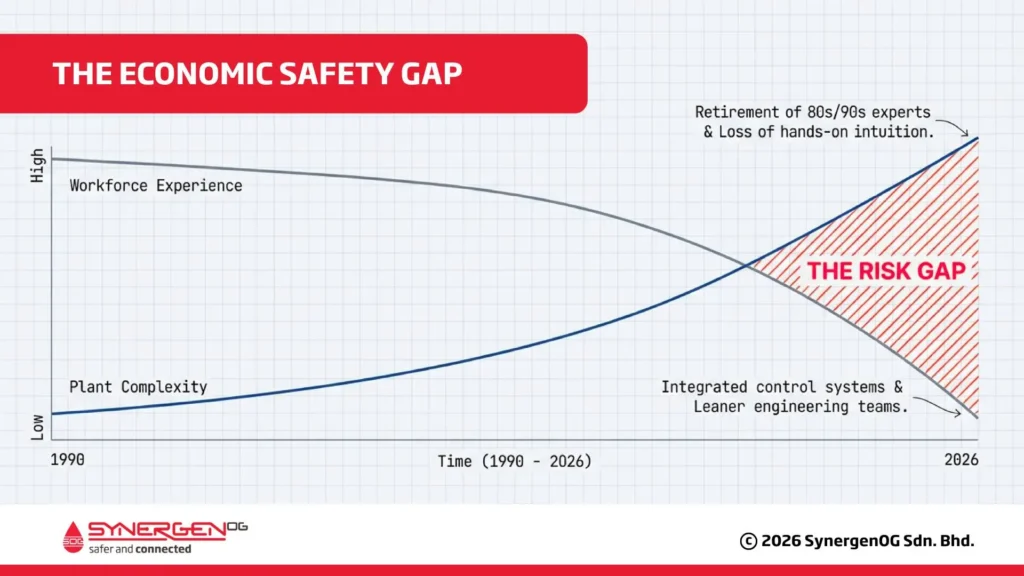

AI and the Economic Safety Gap

HAZOP has earned its place as the workhorse of process hazard analysis because it forces teams to be systematic: break a process into nodes, apply guidewords, and ask, over and over, “how could this deviate from design intent, and what happens next?” In practice, though, the quality of hazard identification varies more than many organizations admit. Some studies are crisp and defensible; others are thin, inconsistent, and hard to learn from later.

By 2026, many industries such as oil & gas, chemicals, and pharmaceuticals are facing what researchers call the “Economic Safety Gap.” Many experienced engineers who built plants in the 1980s and 1990s have retired. They took decades of practical experience with them. Now, younger engineers must manage more complex facilities, often without that same depth of hands-on knowledge.

Generative AI has entered the picture as a tool that promises to make HAZOP faster. It can draft node lists, fill in worksheets, and suggest possible causes and safeguards. But speed is not the real question. The real question is whether AI can make HAZOP more complete and more reliable, without creating new risks like invented scenarios or missing real hazards.

The answer appears to be: yes, but only with strong human oversight. AI should support the team, not replace it. HAZOP must remain a human-led activity.

What “good” HAZOP hazard identification looks like

A HAZOP is a structured, team-based review intended to identify hazards and operability issues by examining deviations from design intent. The workflow is familiar: define scope and assumptions, split the process into nodes, then for each node apply guidewords (e.g., No/More/Less/Reverse/As well as) to process parameters (flow, pressure, temperature, level, composition).

Each deviation is developed into credible causes, consequences, existing safeguards, and recommendations/actions. Standards guidance (e.g., IEC 61882) emphasizes that the discipline is in the method and documentation, but the value comes from multidisciplinary judgment in the room.
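As a rough illustration of the workflow above, the node × parameter × guideword grid can be sketched as a small data structure. This is a minimal sketch, not a standard worksheet schema: the class name `WorksheetRow`, the helper `blank_worksheet`, and the node names are hypothetical, and the guideword and parameter lists are only the examples quoted above.

```python
from dataclasses import dataclass, field
from itertools import product

# Example guidewords and parameters taken from the text; real studies use longer lists.
GUIDEWORDS = ["No", "More", "Less", "Reverse", "As well as"]
PARAMETERS = ["flow", "pressure", "temperature", "level", "composition"]


@dataclass
class WorksheetRow:
    """One deviation for one node, to be developed by the team (hypothetical schema)."""
    node: str
    parameter: str
    guideword: str
    causes: list = field(default_factory=list)
    consequences: list = field(default_factory=list)
    safeguards: list = field(default_factory=list)
    recommendations: list = field(default_factory=list)

    @property
    def deviation(self) -> str:
        return f"{self.guideword} {self.parameter}"


def blank_worksheet(nodes, parameters=PARAMETERS, guidewords=GUIDEWORDS):
    """Return one empty row per node x parameter x guideword combination."""
    return [WorksheetRow(n, p, g) for n, p, g in product(nodes, parameters, guidewords)]


# Hypothetical node names, for illustration only.
rows = blank_worksheet(["Feed pump P-101", "Reactor R-201"])
print(len(rows), rows[0].node, "-", rows[0].deviation)
```

Not every combination is meaningful (“Reverse temperature”, for example), so the grid is a prompt for discussion: the team strikes out rows that do not apply and develops the rest.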

When practitioners say “a high-quality HAZOP,” they usually mean more than “a lot of rows in a worksheet.” Useful quality dimensions you can actually evaluate include:

- Completeness/coverage: credible deviations explored across nodes and operating modes.

- Scenario validity: causes/consequences are physically and operationally plausible for this specific plant.

- Safeguard relevance and diversity: protective layers aren’t just procedural; they reflect engineered, instrumented, and administrative controls where appropriate.

- Traceability: each claim ties back to process safety information (PSI), P&IDs, design intent, and applicable standards.

- Reproducibility/consistency: another competent team can follow the logic and reach comparable conclusions.

Why hazard identification quality fails in practice

Even experienced teams miss scenarios, and the failure patterns are remarkably consistent across industries. Time pressure compresses discussion. Documentation varies by facilitator, business unit, or contractor. Specialty knowledge (controls, corrosion, rotating equipment, relief systems, human factors) may be missing for critical nodes.

Consequences can be under-elaborated (“fire/explosion” with no escalation path), which hides risk-driving assumptions. And teams often lack rapid access to analogous incidents or prior HAZOP learnings, especially across sites and corporate history.

In short, HAZOP takes time and resources. Important scenarios are often overlooked. Risks may be underestimated because consequences are not fully developed. Investigation reports frequently point out weaknesses in process hazard analysis.

This is exactly the problem AI developers are trying to address.

What AI in HAZOP means in 2026 (automation vs augmentation)

In 2026, AI-assisted HAZOP usually means applying AI, often large language models (LLMs) plus retrieval, to support parts of the HAZOP lifecycle:

- Preparation (scope drafts, node lists),

- Prompting (suggesting deviations/causes to consider),

- Incident retrieval (finding similar events and good practice),

- Transcription and summarization during workshops,

- Post-workshop consistency/quality checks.

However, the responsibility must remain clear:

The HAZOP team owns the study. AI provides suggestions, not decisions.
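One way to make that split of responsibility concrete is to record every AI output as a proposal that cannot reach the worksheet until a named person has reviewed it. The sketch below is illustrative only; the names `Suggestion`, `Status`, and `worksheet_entries` are assumptions, not part of any HAZOP tool or standard.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Status(Enum):
    PROPOSED = "proposed"
    ACCEPTED = "accepted"
    REJECTED = "rejected"


@dataclass
class Suggestion:
    """A candidate cause, consequence, or safeguard (hypothetical record)."""
    text: str
    source: str                       # e.g. "ai" or "team"
    status: Status = Status.PROPOSED
    reviewed_by: Optional[str] = None

    def review(self, reviewer: str, accept: bool) -> None:
        """Record a human decision; an empty reviewer name is refused."""
        if not reviewer:
            raise ValueError("a named human reviewer is required")
        self.reviewed_by = reviewer
        self.status = Status.ACCEPTED if accept else Status.REJECTED


def worksheet_entries(suggestions):
    """Only accepted suggestions are allowed into the worksheet."""
    return [s for s in suggestions if s.status is Status.ACCEPTED]


# Illustrative data only.
items = [
    Suggestion("Blocked outlet causes overpressure", source="ai"),
    Suggestion("Operator bypasses interlock", source="ai"),
]
items[0].review("J. Smith (process engineer)", accept=True)
print([s.text for s in worksheet_entries(items)])
```

The design choice here is that the default state is `PROPOSED`: anything the team has not explicitly accepted stays out of the study record.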

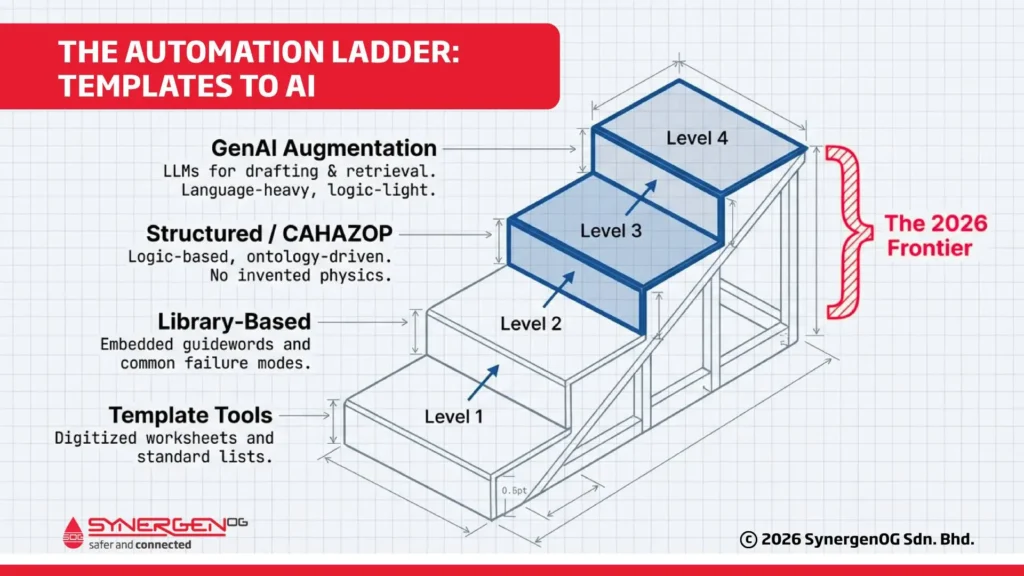

AI tools today fall into different levels:

- Template tools: digitize worksheets and reporting.

- Library-based tools: embed guidewords, standard deviations, and common failure modes.

- Structured reasoning / CAHAZOP (Computer-aided HAZOP): use knowledge representations (ontologies, qualitative propagation) to support completeness and causal structure.

- GenAI augmentation: uses LLMs for language-heavy tasks (drafting, summarizing, retrieval, cross-checking) and more cautiously for suggesting scenarios.

The bridge between structured methods and GenAI matters: classic computer-aided HAZOP approaches rely on structured knowledge and propagation logic to avoid inventing physics. In practice, GenAI tends to be most defensible when it is grounded in that structured plant context rather than improvising from generic training data.

What Research Shows: Can GenAI improve hazard identification quality?

The most reliable evidence comes from peer-reviewed studies.

One 2026 study tested four AI models by asking them to create HAZOP worksheets from the same P&ID. The models produced text that looked similar to expert-written HAZOPs. But when experts checked the technical accuracy, only 19–37% of scenarios were truly valid. Many safeguards suggested were procedural rather than engineering-based.

The authors’ conclusion is the practical one: LLMs can serve as supportive tools, not replacements for expert-led HAZOP.

A separate 2026 Safety Science review on benchmarking (“From hallucinations to hazards”) argues that standard LLM benchmarks miss what safety-critical work needs: performance consistency. In a pilot study across safety-critical scenarios over three runs, the paper reports volatility: response success rate might be stable, but analytical quality varied between runs, with hazard identification scores shifting and causal reasoning poor and unpredictable. This is a key governance implication for HAZOP: if a tool’s outputs drift run-to-run, you need QA gates and acceptance workflows that assume variability.
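A QA gate that assumes variability could start by measuring how much the identified scenarios overlap between repeated runs on the same input. The sketch below uses mean pairwise Jaccard overlap; this metric, the function names, the sample data, and the 0.8 threshold are all assumptions for illustration, not the method used in the cited paper.

```python
from itertools import combinations


def jaccard(a: set, b: set) -> float:
    """Overlap of two sets: 1.0 means identical, 0.0 means disjoint."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def run_consistency(runs: list) -> float:
    """Mean pairwise Jaccard overlap across repeated runs."""
    pairs = list(combinations(runs, 2))
    if not pairs:
        raise ValueError("need at least two runs to compare")
    return sum(jaccard(a, b) for a, b in pairs) / len(pairs)


# Hypothetical scenario keys (node, deviation, cause) from three runs on the same P&ID.
runs = [
    {("R-201", "More pressure", "blocked outlet"), ("R-201", "No flow", "pump trip")},
    {("R-201", "More pressure", "blocked outlet"), ("R-201", "No flow", "valve closed")},
    {("R-201", "More pressure", "blocked outlet"), ("R-201", "No flow", "pump trip")},
]

score = run_consistency(runs)
needs_review = score < 0.8  # hypothetical acceptance threshold
print(f"consistency={score:.2f}, needs_review={needs_review}")
```

Exact matching on text is a simplification: in practice the scenario keys would need normalizing (or mapping to a controlled vocabulary) before comparison, otherwise rewordings of the same cause count as disagreement.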

Industry tool evaluation work also signals where the market actually is. An IChemE Hazards paper evaluating AI-assisted HAZOP software tools categorizes tools into template-based, library-based, and AI-based, and explicitly flags challenges that hinder effective automation: hallucination, reduced accuracy, time consumption, high costs, and weak P&ID integration. It also points toward the direction vendors are chasing: better predictive capability driven by historical P&IDs and historical HAZOP data.

Finally, industry conference activity (e.g., petroleum/chemicals) shows active development of AI-powered HAZOP/PHA frameworks aiming for more uniform outcomes and improved efficiency. Treat this as a signal of adoption interest and reported approaches, not proof of safety performance.

Practical GenAI use cases across the HAZOP lifecycle

If you want quality improvements (not just faster paperwork), focus on where GenAI is naturally strong: language, retrieval, and consistency enforcement, and be conservative where it is weak: plant-specific causal truth.

Before the Workshop

AI can help with preparation by:

- Drafting scope documents.

- Suggesting node lists.

- Creating worksheet templates.

- Generating lists of missing process safety information.

But these outputs must be checked carefully against approved documents.

During the Workshop

AI can act as a “copilot” by:

- Transcribing discussions clearly.

- Writing structured consequence statements.

- Notifying the team when safeguards are missing.

- Retrieving similar past incidents to challenge assumptions.

However, only humans can judge whether a scenario makes sense in the actual plant.

Human oversight is essential.

After the Workshop

AI can improve quality control by:

- Finding missing fields.

- Flagging duplicated or inconsistent safeguards.

- Highlighting the overuse of procedural controls.

- Summarizing changes during revalidation studies.

This is also where AI can help enforce corporate learning, making it harder for teams to silently repeat the same omissions year after year.
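Several of the post-workshop checks listed above are simple enough to express as deterministic rules, with no language model involved. The following is a minimal sketch under assumed conventions: rows are plain dictionaries, each safeguard carries a `type` label (`engineered`, `instrumented`, or `procedural`), and the 50% procedural limit is an arbitrary illustrative threshold.

```python
from collections import Counter

REQUIRED_FIELDS = ("causes", "consequences", "safeguards")


def qa_findings(rows, procedural_limit=0.5):
    """Return (row_index, message) pairs for rows that fail basic checks."""
    findings = []
    for i, row in enumerate(rows):
        # Check 1: required fields must be present and non-empty.
        for name in REQUIRED_FIELDS:
            if not row.get(name):
                findings.append((i, f"missing {name}"))

        safeguards = row.get("safeguards", [])

        # Check 2: the same safeguard listed twice (case and whitespace ignored).
        counts = Counter(s["description"].strip().lower() for s in safeguards)
        for desc, n in counts.items():
            if n > 1:
                findings.append((i, f"duplicate safeguard: {desc}"))

        # Check 3: too large a share of procedural controls.
        if safeguards:
            share = sum(s["type"] == "procedural" for s in safeguards) / len(safeguards)
            if share > procedural_limit:
                findings.append((i, f"procedural share {share:.0%} exceeds limit"))
    return findings


# Illustrative worksheet rows only.
rows = [
    {"deviation": "More pressure", "causes": ["blocked outlet"],
     "consequences": ["vessel overpressure"],
     "safeguards": [{"description": "PSV-101", "type": "engineered"},
                    {"description": "High-pressure trip", "type": "instrumented"}]},
    {"deviation": "No flow", "causes": ["pump trip"], "consequences": [],
     "safeguards": [{"description": "Operator rounds", "type": "procedural"},
                    {"description": "operator rounds ", "type": "procedural"}]},
]

findings = qa_findings(rows)
for index, message in findings:
    print(f"row {index}: {message}")
```

Findings like these are prompts for the team to revisit a row, not verdicts; a row with only procedural safeguards may be legitimate, but it should be a conscious decision.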

Where structured CAHAZOP still wins

For completeness and causal reasoning, structured approaches (ontologies, propagation) remain valuable because they encode relationships unambiguously and can systematically explore propagation paths. Ontology-based HAZOP automation research emphasizes that the completeness of results depends on the quality and completeness of the underlying knowledge representation, which is the opposite of “just prompt the model and hope.” In practice, GenAI is best used to translate, draft, and retrieve around that structure, not to invent technical truth.
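To show what “systematically explore propagation paths” can mean in the simplest case, the sketch below walks a hand-written cause-and-effect graph from an initiating event to every end point. It is a toy illustration, not a CAHAZOP implementation: the graph contents and the function name `propagation_paths` are assumptions, and real tools encode much richer qualitative models.

```python
def propagation_paths(graph, start):
    """Depth-first enumeration of every acyclic path from start to an end point.

    A node with no successors, or whose successors are all already on the
    current path, is treated as an end point.
    """
    paths = []

    def walk(node, path):
        successors = [n for n in graph.get(node, []) if n not in path]
        if not successors:
            paths.append(path)
            return
        for nxt in successors:
            walk(nxt, path + [nxt])

    walk(start, [start])
    return paths


# Hypothetical cause -> effect links for a cooled reactor.
graph = {
    "cooling water pump trips": ["no cooling flow to R-201 jacket"],
    "no cooling flow to R-201 jacket": ["reactor temperature rises"],
    "reactor temperature rises": ["reactor pressure rises", "product degrades"],
    "reactor pressure rises": ["relief valve lifts"],
}

paths = propagation_paths(graph, "cooling water pump trips")
for p in paths:
    print(" -> ".join(p))
```

The output is only as complete as the graph: a link that nobody encoded will never appear in a path, which is the dependency on knowledge-representation quality described above.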

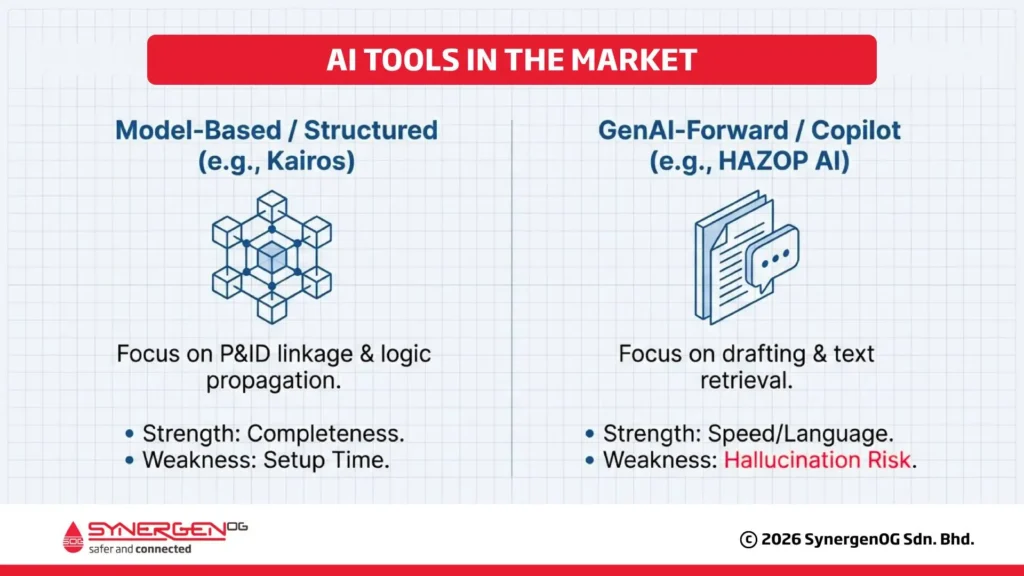

Tools in the market (examples) and what they imply for 2026 capability

The tool landscape makes more sense when you map products to the automation ladder rather than treating “AI” as one thing.

Model-based / digital HAZOP (structured automation): Kairos Technology’s HAZOP Assistant markets coverage features such as automatic guideword coverage, propagation views from initiating event to consequence, P&ID-linked visualization, and automatic report generation. Conceptually, this is closer to “structured reasoning + visualization” than pure GenAI prompting. (kairostech.no)

GenAI-forward “copilot” style: HAZOP AI positions itself as a safety copilot, with goals such as worksheet pre-population, drafting terms of reference, extracting from P&IDs/documents, highlighting missed items, and incorporating incident learnings. These are plausible augmentation targets, but they are product claims—you still need to validate performance on your process context and your PSI. (HAZOP AI)

What IChemE’s categories imply: The Hazards evaluation explicitly distinguishes between template-based, library-based, and AI-based tools and notes that “AI integration” varies widely in scope and reliability. For buyers, this is the key procurement takeaway: don’t compare “AI HAZOP tools” as a single category; compare where they sit on the ladder, what inputs they require, and what failure modes they introduce.

Risk, governance, and compliance expectations for using GenAI in HAZOP

GenAI in HAZOP is safety-relevant software. Treat it like you would treat any safety-impacting change: identify failure modes, implement barriers, verify performance, and control changes.

Failure modes that matter in HAZOP

The safety-relevant risks are now well documented:

- Hallucinated causes/consequences (plausible text, wrong reality).

- False negatives (missed scenarios) masked by high textual similarity.

- Safeguard bias toward procedural controls and weak diversity of protection layers.

- Run-to-run volatility in analytical quality, even for the same model version.

- Misinterpretation of plant-specific safeguards or operating modes.

P&ID-to-HAZOP automation is particularly high-risk when the extraction layer is weak. Tool evaluations explicitly call out time consumption when analyzing equipment across P&IDs and limitations in integrating graphical P&ID representations, meaning errors can enter before the model even “reasons.”

Conclusion

In 2026 and beyond, AI in HAZOP can improve hazard identification quality primarily by enforcing consistency, accelerating retrieval of relevant learning, and reducing documentation friction, especially through HAZOP augmentation rather than full automation.

But research shows scenario validity and safeguard quality remain limiting factors, and benchmarking work highlights volatility as a safety-critical concern. The winning pattern is human-led HAZOP with grounded, validated AI support, governed like any other safety-impacting system.

In the future, HAZOP may become more dynamic.

Instead of updating studies every five years, plants may move toward real-time risk dashboards. AI could monitor live sensor data and flag deviations continuously — almost like running a 24/7 HAZOP.

AI may also integrate automatic LOPA calculations and determine required Safety Integrity Levels immediately.

If this happens, the role of the Process Safety Engineer will shift. Engineers will spend less time facilitating brainstorming and more time verifying automated analyses and ensuring system integrity.

But even in this future, one principle will remain unchanged:

Safety accountability belongs to humans.

References:

- https://engineering.purdue.edu/P2SAC/presentations/documents/1.HAZOPAutomationandAugmentationandAI.pdf

- https://www.aiche.org/ccps/resources/glossary/process-safety-glossary/hazard-and-operability-study-hazop

- https://www.kairostech.no/hazop-assistant

- https://www.sciencedirect.com/science/article/pii/S0925753525002814

- https://pmc.ncbi.nlm.nih.gov/articles/PMC7581379/